Deep Learning | GAI God

Deep learning models process information through successive layers of interconnected 'neurons,' enabling them to learn complex representations and perform…

Overview

The conceptual seeds of deep learning were sown in the mid-20th century with early work on artificial neural networks. The field saw a resurgence in the 1980s when the backpropagation algorithm was popularized, notably by the 1986 work of David Rumelhart, Geoffrey Hinton, and Ronald Williams, and later extended by researchers including Yann LeCun and Yoshua Bengio, making it practical to train networks with multiple layers. Significant breakthroughs in the 2000s and 2010s, driven by increased data availability (e.g., ImageNet) and parallel processing on GPUs, propelled deep learning into the mainstream, with landmark achievements such as AlexNet's victory in the 2012 ImageNet competition cementing its dominance in computer vision.

⚙️ How It Works

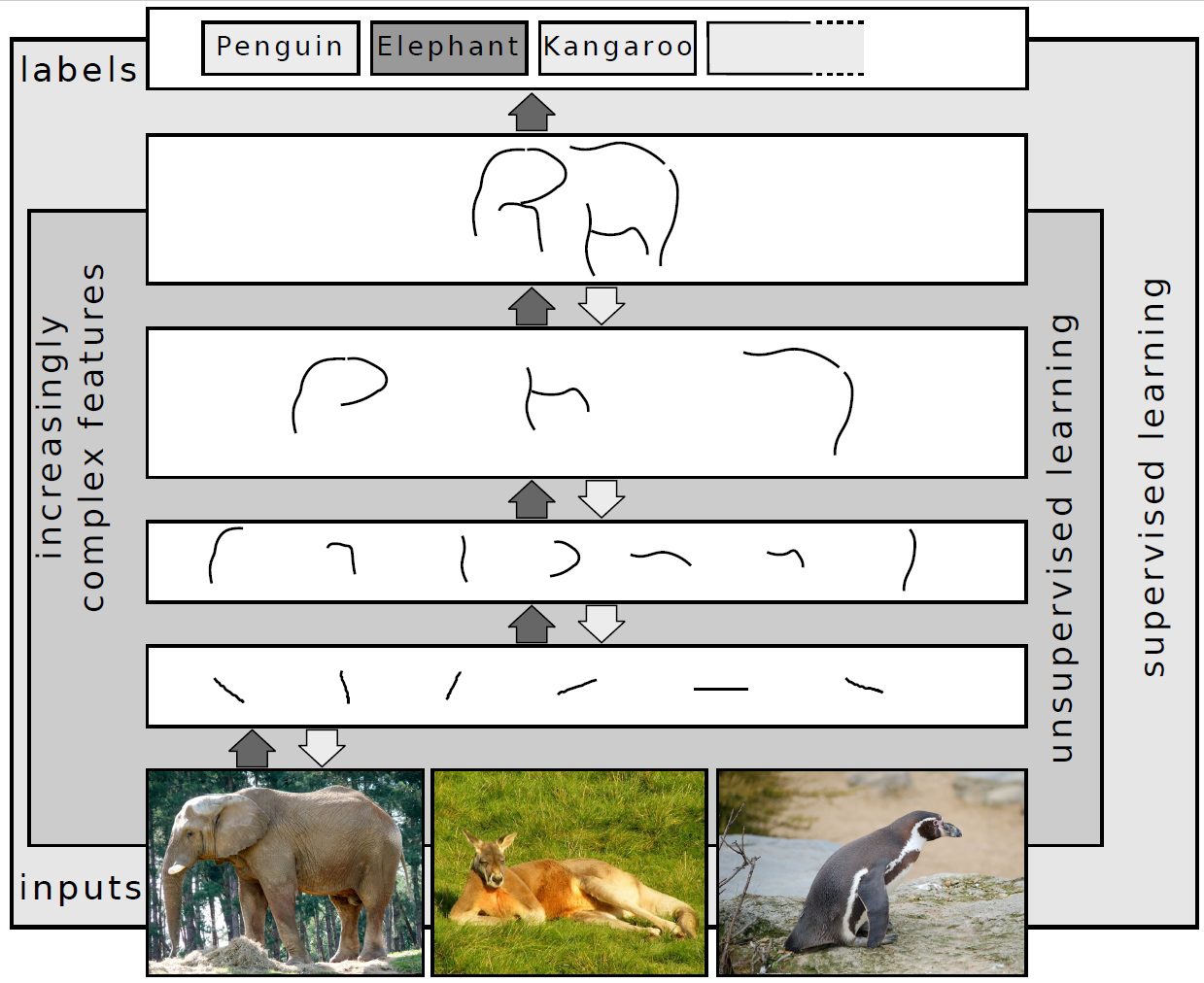

Deep learning models process data through artificial neural networks composed of multiple layers of interconnected nodes, or 'neurons.' Each neuron receives inputs, computes a weighted sum of them (usually plus a bias term), and passes the result through an activation function to produce an output. In a deep network, data flows sequentially through these layers: an input layer receives raw data, one or more 'hidden' layers progressively extract more complex features, and an output layer produces the final result (e.g., a classification or prediction). The 'depth' of a network refers to its number of hidden layers, which is what allows hierarchical learning: early layers might detect edges or textures, while later layers recognize objects or concepts. Training adjusts the weights between neurons, typically by using backpropagation to compute how the loss changes with each weight and then updating the weights to reduce that loss, so that the network learns to make accurate predictions from labeled or unlabeled data.
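The mechanics described above can be made concrete with a small sketch. The following is a minimal illustration in plain Python, not a production implementation: it assumes a network with one hidden layer, sigmoid activations, a mean-squared-error loss, and full-batch gradient descent on the XOR toy problem. The function names, layer sizes, learning rate, and seed are all illustrative choices, not anything prescribed by the text.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, params):
    """Forward pass: input layer -> one hidden layer -> output neuron."""
    W1, b1, W2, b2 = params
    # Each hidden neuron: weighted sum of inputs plus bias, then activation.
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    # The output neuron does the same over the hidden activations.
    output = sigmoid(sum(w * h for w, h in zip(W2, hidden)) + b2)
    return hidden, output

def mean_squared_error(data, params):
    return sum((forward(x, params)[1] - y) ** 2 for x, y in data) / len(data)

def train_step(data, params, lr):
    """One full-batch gradient-descent step using backpropagation."""
    W1, b1, W2, b2 = params
    n_hidden, n_in = len(W1), len(W1[0])
    gW1 = [[0.0] * n_in for _ in range(n_hidden)]
    gb1 = [0.0] * n_hidden
    gW2 = [0.0] * n_hidden
    gb2 = 0.0
    for x, y in data:
        hidden, out = forward(x, params)
        # Gradient of the mean squared error w.r.t. the output pre-activation.
        d_out = 2.0 * (out - y) * out * (1.0 - out) / len(data)
        gb2 += d_out
        for j in range(n_hidden):
            gW2[j] += d_out * hidden[j]
            # Propagate the error back through hidden neuron j.
            d_hid = d_out * W2[j] * hidden[j] * (1.0 - hidden[j])
            gb1[j] += d_hid
            for i in range(n_in):
                gW1[j][i] += d_hid * x[i]
    # Move every weight a small step against its gradient.
    new_W1 = [[W1[j][i] - lr * gW1[j][i] for i in range(n_in)]
              for j in range(n_hidden)]
    new_b1 = [b1[j] - lr * gb1[j] for j in range(n_hidden)]
    new_W2 = [W2[j] - lr * gW2[j] for j in range(n_hidden)]
    return new_W1, new_b1, new_W2, b2 - lr * gb2

def init_params(n_in, n_hidden, seed=0):
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    W1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    W2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    return W1, [0.0] * n_hidden, W2, 0.0

# Toy labeled dataset (XOR), chosen only for illustration.
XOR = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]

params = init_params(n_in=2, n_hidden=3)
initial_loss = mean_squared_error(XOR, params)
for _ in range(2000):
    params = train_step(XOR, params, lr=0.5)
final_loss = mean_squared_error(XOR, params)
print(f"loss before: {initial_loss:.4f}, after: {final_loss:.4f}")
```

Real systems use frameworks that compute these gradients automatically and run on GPUs, but the loop is the same in spirit: forward pass, measure the loss, propagate the error backwards, and adjust the weights.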

📊 Key Facts & Numbers

The scale of deep learning is staggering: models can contain hundreds of billions of parameters, such as Google's PaLM with 540 billion. Training these models requires immense computational resources, with large-scale training runs costing millions of dollars in cloud computing fees. The ImageNet dataset, a benchmark for image recognition, contains over 14 million images, and training a model on it from scratch can take days to weeks depending on the hardware. The global AI market, heavily driven by deep learning, was valued at approximately $150 billion in 2023 and is projected to exceed $1.3 trillion by 2030, according to various market research firms. OpenAI's GPT-4 model, a prime example of deep learning's capabilities, reportedly has over 1.7 trillion parameters, though this figure is unconfirmed.
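To give a feel for what parameter counts mean in practice, here is a rough back-of-the-envelope sketch. It assumes fully connected layers (weights plus one bias per output neuron) and 2 bytes of storage per parameter, as with 16-bit floats. Both helper functions and the example layer sizes are hypothetical, and the 1.7 trillion figure is the unconfirmed number quoted above, used only as an input to the arithmetic.

```python
def dense_param_count(layer_sizes):
    """Parameters in a fully connected network: weights plus biases per layer."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

def weight_memory_gb(n_params, bytes_per_param=2):
    """Storage for the weights alone, in gigabytes (assumes 16-bit values)."""
    return n_params * bytes_per_param / 1e9

# A small illustrative network: 784 inputs, 128 hidden units, 10 outputs.
small = dense_param_count([784, 128, 10])
print(small)  # 101770

# Weights alone for a model with 1.7 trillion parameters at 2 bytes each.
print(weight_memory_gb(1_700_000_000_000))  # 3400.0 GB, i.e. about 3.4 TB
```

This counts only the stored weights. Training typically needs several times more memory for gradients, optimizer state, and activations, which is part of why large runs are spread across many accelerators.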

👥 Key People & Organizations

The 'godfathers' of deep learning are widely recognized as Geoffrey Hinton, Yann LeCun, and Yoshua Bengio, who shared the 2018 Turing Award for their foundational work. Andrew Ng, co-founder of Coursera and former chief scientist at Baidu, has been instrumental in popularizing deep learning through online courses and in advocating for its practical applications. Major tech companies like Google, Meta, Microsoft, and NVIDIA invest heavily in deep learning research and development, employing thousands of researchers and engineers. Research bodies such as the NIH, and initiatives such as the Malaria Detection Challenge, apply deep learning to scientific problems, while startups like OpenAI and Anthropic push the boundaries of generative AI.

🌍 Cultural Impact & Influence

Deep learning has profoundly reshaped culture and society, moving AI from science fiction to everyday reality. It powers features like personalized recommendations on Netflix, voice assistants like Amazon Alexa and Apple Siri, and sophisticated image filters on platforms like Instagram. The ability of deep learning models to generate realistic text, images, and even music has sparked both excitement and concern, leading to new forms of creative expression and ethical dilemmas. The proliferation of AI-generated content, from deepfakes to AI art, has raised questions about authenticity, copyright, and the future of human creativity. Deep learning's influence extends to news dissemination, political discourse, and even the job market, prompting widespread societal adaptation.

⚡ Current State & Latest Developments

The current landscape of deep learning is characterized by rapid advancements in large language models (LLMs) and generative AI. Models and assistants such as GPT-4, Google's Gemini (formerly Bard), and Claude are demonstrating increasingly sophisticated natural language understanding and generation capabilities. Research is also focusing on more efficient training methods, smaller models that can run on edge devices, and improved interpretability. The development of multimodal AI, capable of processing and generating information across text, images, audio, and video, is a major trend. Companies are racing to integrate these capabilities into their products and services, leading to a dynamic and competitive market in 2024.

🤔 Controversies & Debates

Deep learning faces significant controversies, primarily concerning ethical implications, bias, and environmental impact. Bias in training data can lead to discriminatory outcomes in AI systems, as seen in facial recognition technology that performs poorly on certain demographics. The immense computational power required for training large models raises concerns about their carbon footprint: one widely cited 2019 estimate put the emissions from training a single large model with neural architecture search at roughly five times the lifetime emissions of an average car. Furthermore, the 'black box' nature of many deep learning models makes it difficult to understand how they reach their decisions, which complicates accountability and trust, especially in critical applications like healthcare and finance. The potential for misuse, such as generating misinformation or powering autonomous weapons, also fuels debate.
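One simple way to make the bias concern measurable is to compare a model's accuracy across demographic groups. The sketch below is illustrative only: the `accuracy_by_group` helper, the group names, and the records are all made up for the example and are not real measurements of any system.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}

# Made-up toy records purely for illustration, not real data.
toy = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]

per_group = accuracy_by_group(toy)
gap = max(per_group.values()) - min(per_group.values())
print(per_group)  # {'group_a': 1.0, 'group_b': 0.5}
print(gap)        # 0.5
```

A large gap between groups is a signal worth investigating, though a single accuracy number per group is only a starting point; audits in practice usually look at several error types and at how the evaluation data was collected.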

🔮 Future Outlook & Predictions

The future of deep learning points towards more powerful, efficient, and accessible AI. Researchers continue to refine and extend architectures such as Graph Neural Networks and Transformers to tackle increasingly complex problems. A key frontier is achieving Artificial General Intelligence (AGI), though this remains a distant and debated goal. Efforts are underway to develop 'explainable AI' (XAI) to address the interpretability issue, and 'green AI' to reduce the environmental cost of training. We can expect deep learning to become more integrated into scientific discovery, personalized medicine, and complex system management. The democratization of DL tools and pre-trained models will likely enable wider adoption across industries and by individual creators.

💡 Practical Applications

Deep learning's practical applications are pervasive. In computer vision, it powers autonomous vehicles, medical image analysis for disease detection (e.g., identifying cancerous tumors in MRI scans), and security surveillance. For natural language processing, DL enables machine translation, sentiment analysis, chatbots, and content generation tools like Jasper AI. In healthcare, it aids in drug discovery and personalized treatment plans. Recommendation systems on platforms like YouTube and Amazon rely heavily on DL to predict user preferences. Financial institutions use DL for fraud detection, algorithmic trading, and credit scoring. Scientific research benefits from DL in areas like climate modeling, particle physics, and materials science.

Key Facts

- Category: technology

- Type: topic